When working on client projects I come across copious amounts of source code, which sometimes is very well-maintained, sometimes less so. The various code bases are as diverse as their owners and respective stakeholders:

A few come with an inherent sense of quality, lots of unit tests serving as the specification for the product. They’re typically delightful to maintain and extend.

Some, though, at times leave the impression of having been cobbled together in a rather haphazard, impromptu manner in order to solve an urgent problem, with no time to devise a ‘proper’ software architecture or model. This isn’t bad per se, mind you. In fact, I presume there are just as many software projects that failed horribly due to over-engineering as there are duct-tape solutions based on Microsoft Excel that started their lives as a ‘prototype’ (and everybody knows that nothing lasts as long as a prototype in production …).

Most software products, however, fall in between those extremes: mostly comprehensible source code, average test coverage, some weird stuff nobody on the current team really understands anymore, but an overall low WTFs / minute ratio.

Constantly pursuing improvement in code quality is a vital aspect of delivering a software product. It’s not so much the absolute, objective code quality that matters (if such a thing even exists); it’s the improvement process and the relative improvement achieved over time. In other words, a software project in a dismal state of quality isn’t a lost cause at all but a huge opportunity for changing it for the better.

Given this, it should come as no surprise that, apart from commonplace measures for ensuring software quality such as continuous deployment, I’m highly interested in new approaches that help you track down software bugs. (Commonplace nowadays, that is … I remember a time long ago when production websites weren’t checked in and built via source code management tools; instead, edits were made live on the production environment with FTP …)

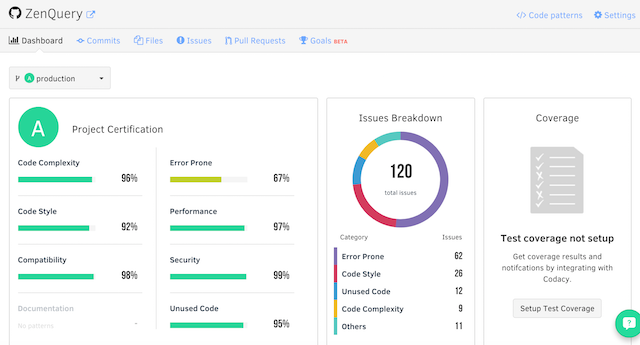

At Web Summit 2015 I met the founders of Codacy. Codacy is an automated code review tool that, according to its website, allows you to ship better code, faster. Codacy provides you with extensive static analysis, code coverage and complexity information for each commit. I’ve tried it out with some of my own open source projects, particularly ZenQuery, and I was quite impressed with the results. While the overall code quality of ZenQuery doesn’t seem to be so bad, Codacy uncovered a few issues that could be improved upon.

A similar product is Scrutinizer, which at first glance seems to be quite capable, too. I haven’t tested it yet, though, so I’ll have to leave that for you to try out yourselves.

Anyway, no matter whether you’re using sophisticated tools such as the two mentioned above or just plain source code management, unit testing and continuous integration, the gist of this post is: Good software quality matters, but improving software quality matters even more!
